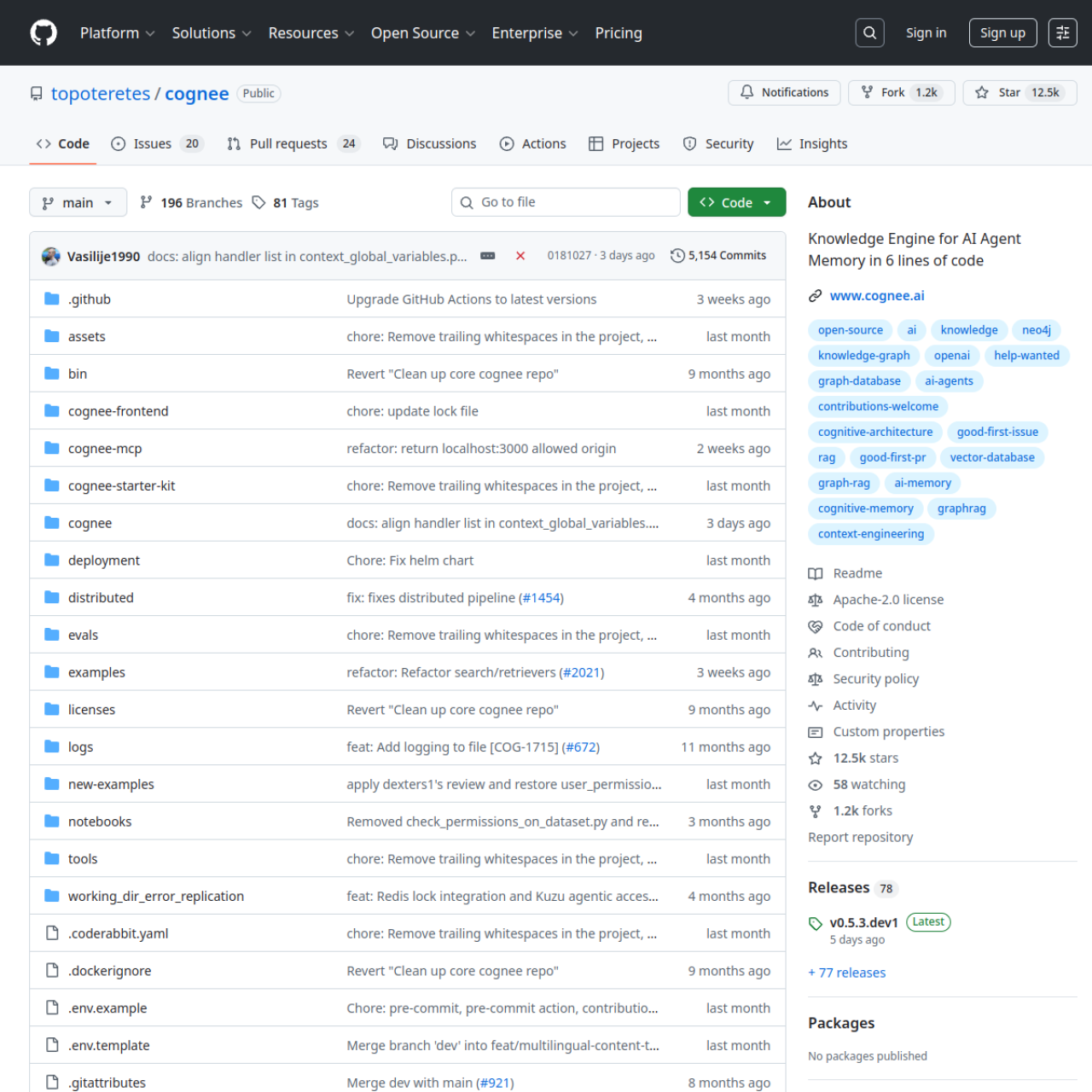

Cognee is built for AI systems that need persistent memory and relationship-aware retrieval, not just flat vector similarity lookups.

Key Features

- Knowledge Graph Construction: Turn raw content into linked entities and relationships.

- Semantic Retrieval Layer: Combine embedding similarity with relationship context from the graph when retrieving.

- Model Provider Flexibility: Integrate different LLM and embedding stacks.

- MCP Ecosystem Support: Connect to assistant workflows through the Model Context Protocol (MCP).

- API-First Design: Ingest, process, and query through service APIs.

- Storage Backends: Support multiple persistence strategies for different use cases.
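
To make the first two features concrete, here is a minimal sketch in plain Python of what relationship-aware retrieval adds over a flat lookup. It does not use Cognee's API. The `Triple` type and the `build_graph` and `related` helpers are hypothetical names invented for this illustration, and the sample facts are made up.

```python
from collections import defaultdict
from typing import NamedTuple


class Triple(NamedTuple):
    """One extracted fact: subject --relation--> obj (hypothetical shape)."""
    subject: str
    relation: str
    obj: str


def build_graph(triples):
    """Index triples by subject so relationships can be followed from an entity."""
    graph = defaultdict(list)
    for t in triples:
        graph[t.subject].append((t.relation, t.obj))
    return graph


def related(graph, entity, max_hops=2):
    """Collect facts reachable from `entity` within `max_hops` relationship hops."""
    facts, frontier, seen = [], [entity], {entity}
    for _ in range(max_hops):
        next_frontier = []
        for node in frontier:
            for relation, obj in graph.get(node, []):
                facts.append((node, relation, obj))
                if obj not in seen:
                    seen.add(obj)
                    next_frontier.append(obj)
        frontier = next_frontier
    return facts


# Made-up sample facts, standing in for entities extracted from raw content.
triples = [
    Triple("Alice", "works_at", "Acme"),
    Triple("Acme", "located_in", "Berlin"),
    Triple("Bob", "works_at", "Globex"),
]
graph = build_graph(triples)
facts = related(graph, "Alice")
```

A question about Alice now surfaces the linked fact about where Acme is located, which a similarity lookup over isolated text chunks could miss if the two facts were stored in different chunks.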

Why Choose Cognee?

- You are building RAG apps that need persistent memory.

- You need more structured context than a plain vector database provides.

- You want to keep the data behind your AI workloads on infrastructure you control.

Docker Deployment

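Cognee is commonly deployed alongside PostgreSQL with pgvector and related service components. Mount persistent volumes for the data services so stored data survives container restarts, and pass API keys through environment variables or a secrets manager rather than hardcoding them in images or compose files.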
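
As one way to enforce the key-management advice, a small preflight check can refuse to start a deployment when secrets are missing or still set to placeholders. This is a sketch under assumptions: the variable names `LLM_API_KEY` and `DB_PASSWORD` and the placeholder values are illustrative, so substitute whatever your deployment actually uses.

```python
import os

# Assumed variable names for illustration; replace with the ones your setup uses.
REQUIRED_SECRETS = ("LLM_API_KEY", "DB_PASSWORD")
# Assumed placeholder values that should never reach a running deployment.
PLACEHOLDERS = {"", "changeme", "your-api-key"}


def missing_secrets(env=None, required=REQUIRED_SECRETS):
    """Return names of required secrets that are unset or still placeholders."""
    env = os.environ if env is None else env
    return [
        name for name in required
        if env.get(name, "").strip().lower() in PLACEHOLDERS
    ]


# Example with an explicit mapping; call missing_secrets() with no arguments
# to check the real process environment instead.
example_env = {"LLM_API_KEY": "sk-example", "DB_PASSWORD": "changeme"}
problems = missing_secrets(example_env)
```

Here `problems` lists `DB_PASSWORD` because it still holds a placeholder. Running a check like this before the containers start catches misconfiguration early instead of at the first failed model call.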

Getting Started

- Deploy Cognee and its data services.

- Configure model provider credentials.

- Ingest documents or datasets.

- Validate retrieval and graph quality on real queries.
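
The last step can be made measurable with a small evaluation harness. The sketch below computes a hit rate at k over a set of real queries paired with the document each one should surface. The `keyword_retrieve` function is a stand-in retriever written for this example, not a Cognee call, and the documents and queries are invented. In practice you would pass a function that wraps your deployed instance's search.

```python
def hit_rate_at_k(retrieve, cases, k=3):
    """Fraction of queries whose expected document ID appears in the top-k results."""
    if not cases:
        raise ValueError("need at least one evaluation case")
    hits = sum(
        1 for query, expected_id in cases
        if expected_id in retrieve(query)[:k]
    )
    return hits / len(cases)


# Invented sample corpus for the sketch.
DOCS = {
    "doc-billing": "how to update billing details and invoices",
    "doc-backup": "restore the database from a nightly backup",
    "doc-sso": "configure single sign-on for your team",
}


def keyword_retrieve(query):
    """Stand-in retriever: rank document IDs by number of words shared with the query."""
    words = set(query.lower().split())
    return sorted(
        DOCS,
        key=lambda doc_id: len(words & set(DOCS[doc_id].split())),
        reverse=True,
    )


cases = [
    ("update billing details", "doc-billing"),
    ("restore from backup", "doc-backup"),
    ("rotate encryption keys", "doc-keys"),  # no such document: an expected miss
]
score = hit_rate_at_k(keyword_retrieve, cases, k=1)
```

Keeping the retriever as a plain function argument means the same cases can be rerun after each change to ingestion or model configuration, so regressions in retrieval quality show up as a drop in the score.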

Full Setup Guide

Follow this full walkthrough: Cognee Self-Host Guide.